

Advances in Computer Modeling

How Are Climate Models Designed?

As we have learned, the activities of humanity have demonstrably changed the climate in the last two decades and have likely been changing it for the past several decades. The most important factor is the addition of greenhouse gases to the atmosphere, mainly from energy production (carbon dioxide) but also from animal farming and waste management (methane). Other factors are deforestation, air pollution, excess fertilization, soil degradation, and drastic interference in the water cycle on land. How will this great geophysical experiment shape the future? We would like to get some answers to this question.

Early Computer Models of Climate

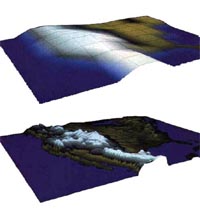

The first attempts at climate modeling were made in the 1960s, making this a very young scientific field. In these early days, the climate system was represented in greatly simplified fashion, for example by the balance of heat radiation entering and exiting the Earth's atmosphere as a function of latitude. But the complexity of the models grew rapidly together with the power of the computers that were running them, and as Dr. Richard Somerville of SIO likes to point out, one requirement of a useful model is that its computations should be fast enough to get ahead of the real climate changes!

In other words, the complexity of the models is constrained by computing power: a model needs to give us answers in a reasonable amount of time, and that time is limited by the speed of the computer. For instance, we could attempt to design a very complex climate model that takes into account the most intricate details of atmospheric physics. However, if even the world's fastest computer takes years to produce a final answer, we have defeated the purpose of making a model, which is supposed to give us relatively quick answers.

In a computer model, the distance between neighboring points describing conditions on the surface of the Earth is referred to as the "resolution," and it is this parameter that is most affected by computing power. Processes that are "below resolution" (such as the local convection that produces thunderstorms) must be simulated in some way so as to retain the functions they provide for heat transfer, evaporation and precipitation (more on this subject later in this lesson). In a manner analogous to the "resolution" of a computer monitor, the better the resolution of our model (that is, the smaller the distance between points on our Earth), the more detailed the image of the Earth's climate we see. However, such high-resolution climate models require high-speed computers.
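To make the link between resolution and computing power concrete, here is a rough Python sketch. It is an illustration only, not how any particular climate model counts its work. The function names are hypothetical, the Earth's surface area is an approximate value, and the cost rule is a simplifying assumption: the number of surface grid cells grows with the inverse square of the grid spacing, and the time step is assumed to shrink in proportion to the spacing, so the total work grows roughly with the inverse cube. Vertical layers are ignored.

```python
EARTH_SURFACE_KM2 = 5.1e8  # approximate surface area of the Earth, in km^2


def grid_cells(spacing_km: float) -> int:
    """Rough count of surface grid cells for a given grid spacing (hypothetical helper)."""
    return round(EARTH_SURFACE_KM2 / spacing_km ** 2)


def relative_cost(spacing_km: float, reference_km: float = 500.0) -> float:
    """Computing cost relative to a reference spacing.

    Assumption: cells scale as 1/spacing^2 and the number of time steps
    scales as 1/spacing, so cost scales as 1/spacing^3.
    """
    return (reference_km / spacing_km) ** 3


if __name__ == "__main__":
    for spacing in (500.0, 250.0, 100.0, 50.0):
        print(
            f"spacing {spacing:6.0f} km: "
            f"{grid_cells(spacing):8d} cells, "
            f"about {relative_cost(spacing):7.0f}x the cost of a 500 km grid"
        )
```

Under these assumptions, halving the grid spacing multiplies the work by about eight, which is why resolution is the parameter most affected by computing power.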
Using such simulations, we can test the responses of the models by entering disturbing factors into their mathematical machinery and observing the results. The technical term for such experiments is "sensitivity analysis." The building of "scenarios" of the future is a special case of such analysis, which provides hints at what might happen in the real world, according to the various models, run with a range of plausible input scenarios. When generating "scenarios," we pool all our experiences and knowledge in the different sciences bearing on climate and produce a best guess for the consequences resulting from a particular political strategy or economic development using the most powerful computers. To demonstrate the ramifications of human-induced climate change, we should be able to predict what happens to climate with and without continued input of manmade trace gases and to check whether differences in the predictions are sufficiently great to impact life in the real world.
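The idea of a sensitivity analysis can be sketched with a toy model in Python. This is a deliberately minimal illustration, far simpler than the models discussed here: a single global radiation balance with no latitude dependence, no greenhouse effect and no feedbacks. The constants are approximate, the function names are hypothetical, and the "disturbing factor" is an extra heating term chosen purely for illustration. The quantity computed is the temperature at which the planet radiates away as much heat as it absorbs, not the observed surface temperature.

```python
SIGMA = 5.670374e-8      # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0  # incoming sunlight at Earth's distance, W m^-2 (approximate)
ALBEDO = 0.3             # fraction of sunlight reflected (approximate)


def emission_temperature(extra_forcing: float = 0.0) -> float:
    """Temperature (K) at which outgoing heat radiation balances absorbed sunlight.

    extra_forcing is a hypothetical disturbing factor in W m^-2.
    """
    absorbed = SOLAR_CONSTANT * (1.0 - ALBEDO) / 4.0  # averaged over the whole sphere
    return ((absorbed + extra_forcing) / SIGMA) ** 0.25


def sensitivity(perturbation: float = 1.0) -> float:
    """Change in temperature (K) per W m^-2 of added forcing."""
    baseline = emission_temperature(0.0)
    disturbed = emission_temperature(perturbation)
    return (disturbed - baseline) / perturbation


if __name__ == "__main__":
    print(f"baseline temperature: {emission_temperature():.1f} K")
    for forcing in (1.0, 2.0, 4.0):  # illustrative values only
        change = emission_temperature(forcing) - emission_temperature()
        print(f"forcing {forcing:.1f} W/m^2 -> change of {change:.2f} K")
```

Running the model with and without the disturbance and comparing the two results is the same pattern, in miniature, as comparing predictions with and without continued input of manmade trace gases.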


© 2002 All Rights Reserved - University of California, San Diego